- Wednesday 27th April 2022

CDM myth #3: a solution looking for a problem

For my next instalment on the Common Domain Model (CDM) myth-busting series, I wanted to respond to one of the most frequent criticisms being levelled at it. For a primer on CDM, please check my first post on the topic.

The goal of this blog series is to uncover some fundamental truths about the CDM by dispelling some myths, and there is probably no better way to do that than to respect and answer criticisms. They often reflect a market perception that cannot just be brushed aside.

The one that I hear most often can be summarised as this: “The CDM is a solution looking for a problem.”

As in earlier posts, I like to go back to the source, i.e. ISDA's white paper on the future of derivatives processing, where the idea of the CDM first emerged. In this paper from 2016, ISDA laid out a starkly clear diagnosis of the current state of the trade processing landscape, which had grown incredibly brittle and inefficient. Before development of the CDM even started in 2018, an industry working group estimated the cost savings that its widespread implementation in post-trade processing could generate at around $3-4BN.

Accurate or not, that’s not the point. The sheer scale of the problem demanded a fresh approach, and the remedy put forward was based on common-sense principles of standardisation and collaboration. My point is this: the CDM has always been the analysis of a problem before being the outline of a solution.

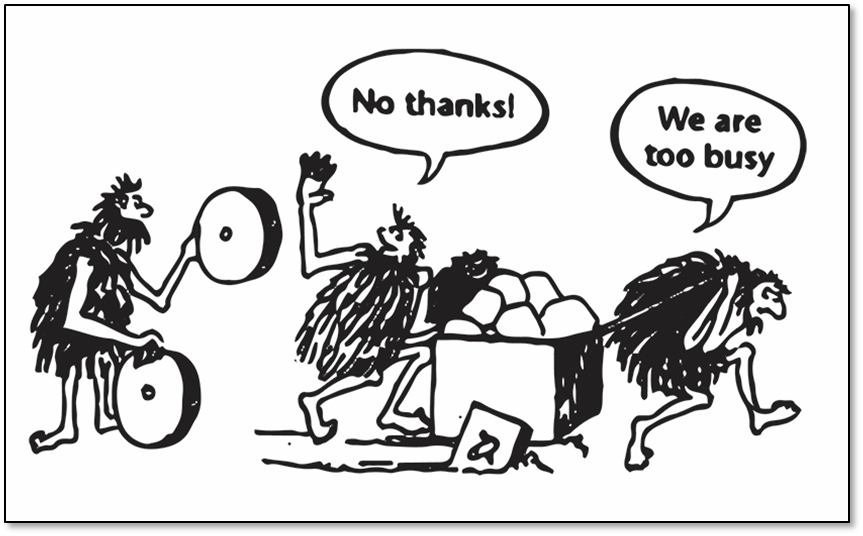

Since then, unless I’ve missed a headline, there hasn’t been any market, regulatory or technological development that fundamentally changes that assessment or its recommendations. If you work for a financial institution that no longer suffers from any of these trade processing inefficiencies, you need not read any further – you do not have any problem that the CDM can solve – lucky you!

But if you acknowledge that the problem persists, then the question is: does the CDM solve it?

This question warrants a finer-grained analysis of the root cause of the inefficiencies. Look closely at the trade processing landscape and you will find solutions at every point in the trade lifecycle, from matching to reporting to collateral management, some of them widely adopted by the industry. Indeed, in most cases, you will be able to choose between many available solutions.

But the diagnosis is not about a lack of solutions – it’s about a lack of inter-operability. To quote the white paper: “The lack of a coordinated approach with clearly defined, unbiased objectives is at the heart of these challenges, as the industry’s limited resources are not always focused on developing and delivering common solutions.”

So in fact, the last thing that the world needs right now is yet another trade processing solution (in the same way as it doesn’t need another standard, as I argued in my earlier post). The industry has an in-betweener type of problem that can only be solved by an in-betweener type of approach. That is precisely why the CDM is not a solution – and never should be.

Let me explain. Assume that the CDM was geared towards solving a specific pain point in the trade lifecycle – take matching and confirmation for example. Rapidly, the model would be customised to that specific use-case and its restricted set of stakeholders (execution venues, banks’ middle-office staff, to name a few), rather than adhering to holistic principles.

Why bother with a composable product model (harder to design) if I can simply drive all my trade execution flow by declaring a product type upfront? Well… It matters for trade reporting, if we then have to specialise the reporting logic – e.g. think of the challenge of reporting the “price” attribute – based on potentially many, many different product types. But if the reporting crowd is not in the room, who cares?
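To make the trade-off concrete, here is a minimal sketch in Python of what "composable" could mean for the reporting crowd. All the types and the `reportable_price` function are hypothetical and heavily simplified; they are not the actual CDM classes, and real price reporting is far more involved. The only point is that the logic is keyed on building blocks rather than on a product-type label.

```python
from dataclasses import dataclass
from typing import Dict, Tuple, Union


# Hypothetical building blocks, for illustration only (not real CDM types).
@dataclass(frozen=True)
class FixedLeg:
    rate: float  # e.g. 0.025 for 2.5%


@dataclass(frozen=True)
class FloatingLeg:
    index: str   # illustrative index name
    spread: float


@dataclass(frozen=True)
class OptionLeg:
    premium: float


Leg = Union[FixedLeg, FloatingLeg, OptionLeg]


@dataclass(frozen=True)
class Product:
    # A product is composed from legs; there is no product-type label.
    legs: Tuple[Leg, ...]


def reportable_price(product: Product) -> Dict[str, float]:
    """Derive price-related fields from the components that are present."""
    fields: Dict[str, float] = {}
    for leg in product.legs:
        if isinstance(leg, FixedLeg):
            fields["fixed_rate"] = leg.rate
        elif isinstance(leg, FloatingLeg):
            fields["spread"] = leg.spread
        elif isinstance(leg, OptionLeg):
            fields["premium"] = leg.premium
    return fields


# Two different products built from the same components.
swap = Product((FixedLeg(0.025), FloatingLeg("EUR-EURIBOR", 0.001)))
swaption = Product(
    (OptionLeg(15000.0), FixedLeg(0.03), FloatingLeg("EUR-EURIBOR", 0.0))
)

print(reportable_price(swap))
print(reportable_price(swaption))
```

In this sketch, the swaption needs no reporting branch of its own: it is handled because its components already are. With a product-type switch, every new label would need its own case in the reporting logic.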

Before we know it, the CDM would have replicated the issue that current solutions suffer from, i.e. the lack of inter-operability between them at different points in the trade lifecycle.

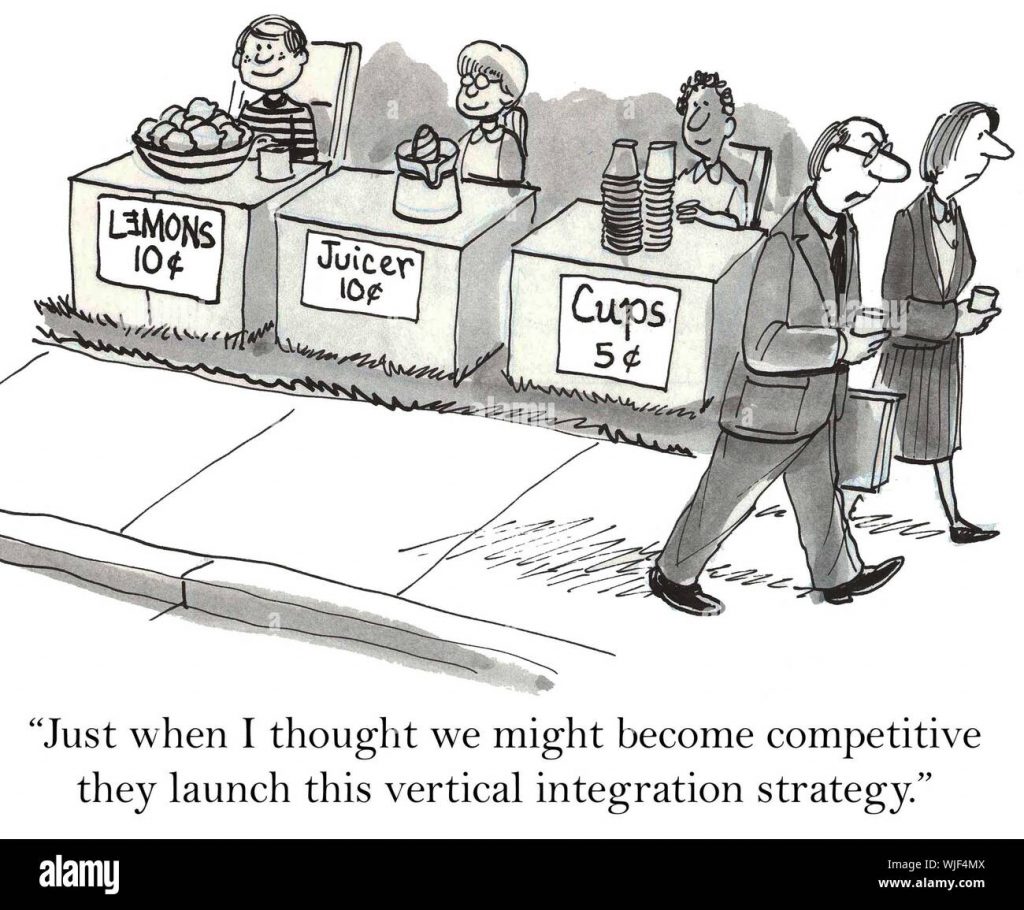

There is a natural response to this issue, though. It is for the provider of said solution gradually to extend its product offering to cover other parts of the trade lifecycle. In the example above: offering reporting services too. Hence the tendency, in the way the market is currently structured, towards vertically integrated solutions – or “platforms”, as they’re often called (they’re anything but). But this narrows end users’ choice: there certainly isn’t one that is best at doing everything.

I often call this reduction of competition: “lock-up by API”. Here is a typical scenario. You have just successfully integrated vendor X in your architecture, after months (if not more) of painstaking work. A little while later, another vendor Y emerges that provides the same solution, only better, faster and cheaper. Your response to Y’s sales pitch? “Sorry, we’d really love to use your product, but we can’t be bothered going through that integration all over again…” In fact, even if the price-quality ratio of X’s service degrades over time, you’re still kind of stuck. Sounds familiar?

Again, the answer to this problem was clearly laid out in the white paper: the industry needs to develop common foundations for “the process, behaviours and data elements” of the trade lifecycle. Such foundations should be solution-agnostic but “once agreed, these processes should be technically encoded as common domain models (CDMs)”. This is how the CDM was born.

That the CDM is “coded” probably explains why it’s often mistaken for a “solution”, based on the confusion that code = system = solution. In fact, it’s more accurate to think of the CDM as a library (in programming-speak) distributed in multiple languages and accompanied by visual representations. That code library is directly usable in solution implementations, but critically remains independent of any particular solution or system (see my previous post that addresses this point).

ISDA’s ongoing Digital Regulatory Reporting (DRR) programme provides the best illustration of this separation in action. DRR delivers a standardised, coded interpretation of the trade reporting rules using CDM-based data to represent the transaction inputs.

Firstly, DRR is only an application of the CDM – it doesn’t leak into the CDM so the latter remains free of any reporting perspective. For instance, the enrichment of transaction inputs with static referential data, often jurisdiction-specific, is part of DRR but not of the CDM. Keeping the CDM as a genuine common denominator is key to ensuring its status as a pivot between different trade processes without being encumbered by any in particular.

And secondly, DRR is distributed as an executable code library just like the CDM is. DRR is not a compliance solution but it ensures that the market applies reporting rules consistently regardless of the specifics of any implementation (internal or vendor-provided).
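The layering described in these two points can be sketched roughly as follows. This is an illustration under stated assumptions, not the real CDM or DRR code: `Party`, `Trade`, `ReportableTrade`, `enrich` and `to_report` are hypothetical names, the identifiers are placeholders, and the field mapping is invented for the example.

```python
from dataclasses import dataclass
from typing import Dict, Mapping


# --- Common model layer: hypothetical stand-in for a CDM-style library ---
@dataclass(frozen=True)
class Party:
    name: str
    identifier: str  # placeholder identifier, not a real LEI


@dataclass(frozen=True)
class Trade:
    trade_id: str
    buyer: Party
    seller: Party
    notional: float
    currency: str


# --- Reporting layer: hypothetical stand-in for a DRR-style application ---
@dataclass(frozen=True)
class ReportableTrade:
    trade: Trade
    # Jurisdiction-specific reference data is attached here,
    # so the common Trade type carries no reporting-only fields.
    buyer_country: str


def enrich(trade: Trade, country_by_id: Mapping[str, str]) -> ReportableTrade:
    """Add static reference data without modifying the common model."""
    return ReportableTrade(trade, country_by_id[trade.buyer.identifier])


def to_report(rt: ReportableTrade) -> Dict[str, str]:
    """Project the enriched trade onto (invented) report fields."""
    return {
        "trade_id": rt.trade.trade_id,
        "notional": f"{rt.trade.notional:.2f} {rt.trade.currency}",
        "buyer_country": rt.buyer_country,
    }


# Usage: the same Trade object could feed any other process unchanged.
trade = Trade(
    trade_id="T-001",
    buyer=Party("Bank A", "ID-BANK-A"),
    seller=Party("Bank B", "ID-BANK-B"),
    notional=1_000_000.0,
    currency="EUR",
)
report = to_report(enrich(trade, {"ID-BANK-A": "FR", "ID-BANK-B": "GB"}))
print(report)
```

The dependency runs one way only: the reporting layer imports the model, never the reverse. That is the property that would let other processes (matching, collateral management) reuse the same `Trade` without inheriting reporting concerns.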

In summary, not making the CDM a solution to a specific problem is what allows it to tackle the inefficiencies it was designed to remove in the first place.

Please get in touch with REGnosys and see how we can help you benefit from the CDM or DRR.